Model Collapse: When AI, Markets, and Regimes Breathe Their Own Exhaust

We are all trapped inside a deteriorating LLM called TrumpGPT.

Model Collapse

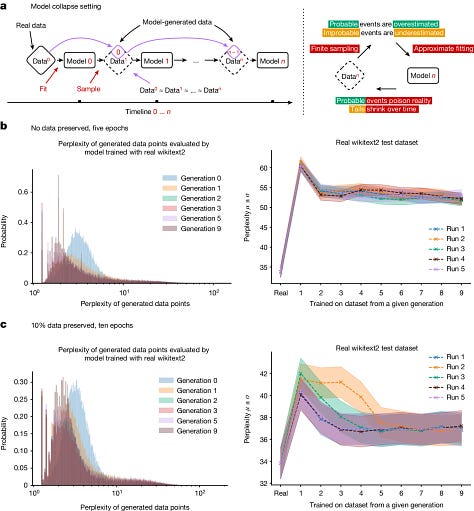

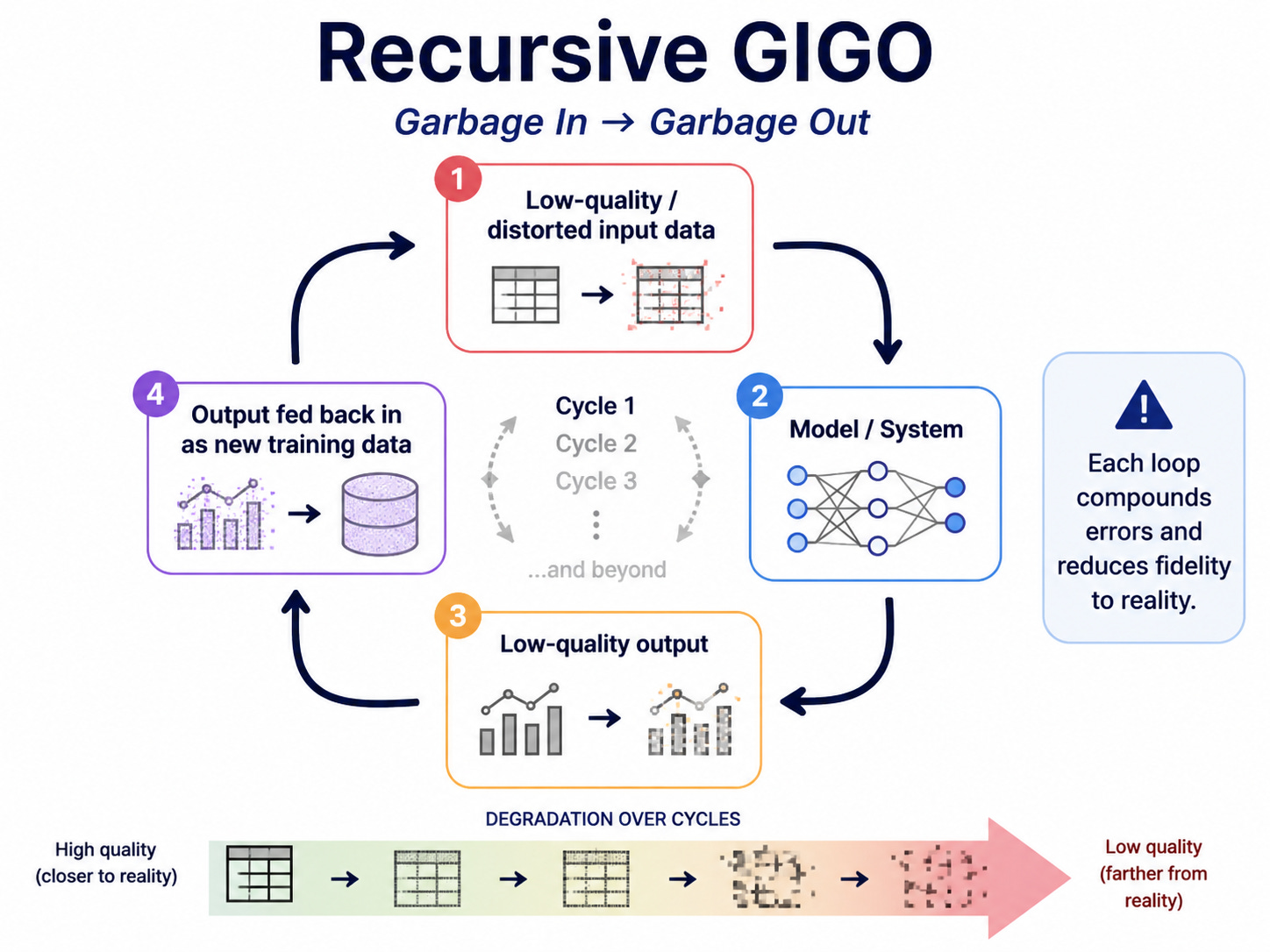

When large language models (LLMs) like Claude, Gemini, Grok, and ChatGPT are recursively trained on model-generated data, hallucinations and errors compound, the models grow increasingly disconnected from reality, and finally undergo model collapse—a state where they may confidently output nonsense.

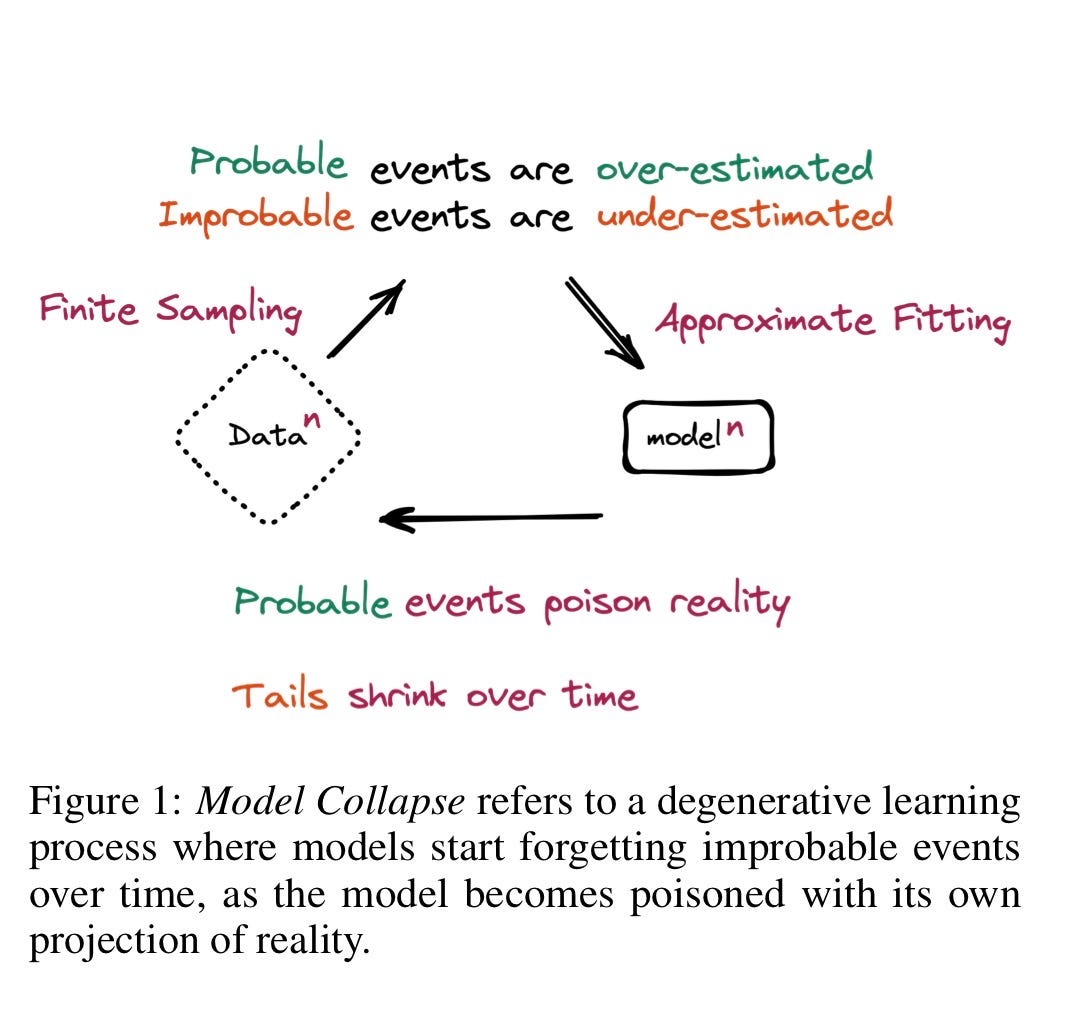

“Model Collapse refers to a degenerative learning process where models start forgetting improbable events over time, as the model becomes poisoned with its own projection of reality.” —Shumailov, et al.

Think of it as being put in a sealed plastic bubble—you will be able to breathe fine at first. But slowly you will be breathing in more and more of your own exhaust—exhaled carbon dioxide—and your systems will break down.

Model collapse is not a new discovery. The industry has known about it for several years.

This problem is fatal to the world-domination plans of the AI industry, which has been marketing the promise of near-infinite growth to its investors and customers since the LLM fad began. But model collapse is also a perfect metaphor for the dynamics driving the industry as a whole, and the American federal government.

Garbage In…

One of the most famous axioms in computer science is garbage in, garbage out (GIGO).

Simply put, if you put bad data into a calculation, the results of the calculation are meaningless. It is a trick of the human mind to assume that just because something comes out of a complex system, like a person or a chatbot, it should automatically be trusted.

But when GIGO is recursive, when the garbage out is recycled to become the garbage in, the relationships between the data progressively break down. Ultimately, the output degenerates into a pile of near-random bits.
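The recursive loop can be illustrated with a toy simulation. This is a minimal sketch, not an LLM: it assumes the "model" is just a bell curve (a mean and a spread) that is refit each generation using only a small batch of samples drawn from the previous generation's fit. The function name `simulate_collapse` and its parameters are hypothetical, chosen for illustration.

```python
import random
import statistics


def simulate_collapse(generations=500, sample_size=10, seed=0):
    """Repeatedly refit a Gaussian to samples drawn from the previous fit.

    Returns the fitted spread (standard deviation) at every generation.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0 stands in for the "real" data
    history = [sigma]
    for _ in range(generations):
        # "Train" only on data produced by the previous generation's model.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)
        history.append(sigma)
    return history


if __name__ == "__main__":
    history = simulate_collapse()
    for gen in (0, 10, 50, 100, 500):
        print(f"generation {gen:3d}: spread = {history[gen]:.3g}")
```

Each refit loses a little information about the tails, and nothing in the loop ever puts it back. With these settings the fitted spread should drift toward zero, which is the toy version of a model "forgetting improbable events." Exact values depend on the seed and sample size.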

The solution to this problem may seem simple: Don’t train a chatbot on its own output. Only use human data.

But what if you don’t have any more human data? What if you have already consumed nearly the entire written corpus of humanity? What then?

We’re finding out right now because that is the scenario the AI industry is currently facing. The central lie of Sam Altman (OpenAI), Dario Amodei (Anthropic), Jensen Huang (Nvidia), Elon Musk and others who promote LLMs as an infinite growth opportunity is: the bigger you build the models and data centers, the better they become. Forever.

That’s only true if you have infinite data, in which case you don’t need LLMs in the first place. The reality is that human knowledge is limited, and chatbots cannot go outside what they are given. That’s why you don’t see new AI-discovered science, or see new software platforms created by AI—despite the hype.

All LLMs do is run statistics on existing data. And the useful data is almost entirely used up.

Garbage Out

One workaround the AI industry has embraced to show the appearance of growth is synthetic data: using the output of chatbots to train new chatbots. This is the exact scenario that creates model collapse. While it can work as a stopgap for certain highly structured domains, like math and code, ultimately you cannot get blood from a stone. LLMs will never replace humans because they are just useful search tools for intelligence, not intelligence itself.

For most of the domains in which people actually use chatbots (recipes, legal advice, research, writing, etc.), unverified synthetic data is poison. Once an error is introduced to the pool as a fact, it gets compounded, and so the output becomes less and less reliable. It also means that over time the output of LLMs sounds more and more similar. They are all converging on a gray middle because they’re all consuming each other’s exhaust.
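The convergence toward sameness can also be sketched with a toy model. The assumption here is a deliberately crude one: each generation's "corpus" is built purely by resampling, with replacement, from the previous generation's corpus, so no new material ever enters. The name `resample_generations` and its parameters are hypothetical.

```python
import random


def resample_generations(vocab_size=1000, generations=50, seed=0):
    """Count distinct items left after each round of self-resampling."""
    rng = random.Random(seed)
    corpus = list(range(vocab_size))  # generation 0: every item is unique
    distinct_counts = [len(set(corpus))]
    for _ in range(generations):
        # The next corpus is drawn only from the previous corpus.
        corpus = rng.choices(corpus, k=vocab_size)
        distinct_counts.append(len(set(corpus)))
    return distinct_counts


if __name__ == "__main__":
    counts = resample_generations()
    for gen in (0, 1, 5, 10, 50):
        print(f"generation {gen:2d}: {counts[gen]} distinct items")
```

Because every new corpus is drawn from the old one, the number of distinct items can only stay flat or fall. Once a rare item fails to be sampled, it is gone for good. Real training pipelines are far more complicated, but the one-way loss of variety is the same basic mechanism.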

It’s not just training data; now chatbot pollution is all over the internet. AI slop is a growing percentage of the output of humanity online. And as that percentage increases, the accuracy of future chatbots will be further reduced—creating a recursive trap for their operators.

Industry of Slop

The idea of being in an echo chamber is nothing new. Every cult, ideology, and identity group has a formal or informal system that enforces the boundaries of its followers’ beliefs. But sometimes an echo chamber can be an emergent phenomenon among those who may not share much except a singular goal. This can create strange bedfellows, like Silicon Valley nerds, global financial markets, and the Trump regime teaming up to inflate the AI bubble.

For example, this is an executive at Anthropic, which is currently seeking an IPO at a valuation of somewhere around a trillion dollars, speculating that within 30 months chatbots will start “building themselves.”

This is just silly, an extension of the concept of the technological singularity: a moment after which technology will create itself and humans will become extinct or be forced to merge with machines. There is no evidence that this will happen, or can ever happen with current technology, and there is an increasingly irrefutable body of data that says quite the opposite: for LLMs, things will only get worse.

Even AI boosters and billionaires who are heavily invested in AI can’t help but notice the core problem. LLMs are unreliable, randomized token predictors. They don’t “know” anything. They don’t learn. And they’re just about as good as they can ever be.

The AI industry has not just been recycling its own ideas to create a mythology about its future; it is also recycling its own financial exhaust to create a fantasy about its solvency. The circular, recursive financing of the LLM craze has created the most concentrated stock market in history—trillions of dollars in alleged deals, all within a small incestuous group.

45% of the S&P 500 is exposed to “AI.” Keeping the myth of infinite growth intact is existential to the entire economy at this point.

But when myths and mathematics collide, things have a way of resolving in one direction. This is an intelligent yet intelligible video summarizing the problem with LLMs and synthetic data:

Regime Collapse

Model collapse due to “forgetting the true underlying data distribution” and becoming “poisoned with its own projection of reality” is almost too perfect a description of both the AI industry at large and the information echo chamber masquerading as the U.S. government.

An AI will collapse if it trains on its own exhaust. An industry will collapse if it believes its own marketing. And a government will collapse if it believes its own propaganda.

In political science, the instability that arises when people are afraid to give an authoritarian leader bad news is known as the dictator’s dilemma.

The classic dictator’s dilemma is that the same fear and repression that protect an autocrat also corrupt the information he needs to rule.

Brutal leaders like Idi Amin, Saddam Hussein, and Muammar Qaddafi suffered from this problem because they engendered mortal fear. No one gave them bad news out of self-preservation.

But the Trump regime has taken the dictator’s dilemma into the 21st century. Trump’s entourage doesn’t just hide bad news from him; it manufactures propaganda around whatever he says so it appears to him that the world constantly rearranges itself to mirror his alternate reality.

The White House is a funhouse mirror of the inside of Donald Trump’s mind.

Sycophancy

One of the biggest problems with LLMs is sycophancy. A chatbot can be trained to make you feel like a genius, even if you’re speaking nonsense. It will simply mirror your ideas in the most flattering way possible regardless of whether they make sense. This can create a psychological dependency, and in some cases “AI psychosis.”

Similarly, Trump’s reality is generated by layers of sycophancy. His handlers ensure no outside information reaches him except what he wants to see or what serves their own purposes. And he spends hours every night consuming his own exhaust on his personal social media site, “Truth Social,” which functions as narcissistic supply for his ego.

Today’s event at the White House, shown below, was a stark and nauseating example of how his bubble works. Trump brought in a group of children as props for an executive order rolling presidential physical fitness tests back 20 years. And he took the opportunity to relitigate his personal grievances and display his grandiosity, without the slightest consideration for those around him.

“And unfortunately, bad things happened. It was a rigged election. And I said, well, I’ll do it again. I had the ultimate fall. I did so well. And I had the ultimate fall. And we won in the landslide. We won every single swing state… On June 14th, we’re going to have the UFC. And the biggest fight they’ve ever done by far-- hardest ticket I’ve ever been involved with. And they’re building a 5,000-seat stadium right in the front of-- right by the front door of the White House.”

TrumpGPT

The difference between the faces of the adults who were trying to humor the president during his diatribe and the faces of the kids who had no idea what he was talking about represents the difference between two completely different realities.

In the children’s reality, the model of the world is still being formed. Kids are information sponges and pattern-matching devices. They reality-test by instinct. So this strange old man in a different world makes them cringe and wince as they try to process his ranting.

However, the sycophants around him have been trained, much like a flawed AI, to simply mirror him, regardless of his compounding errors.

We are all trapped inside a deteriorating LLM called TrumpGPT: a ruling chatbot trained on a lifetime of grievance and sycophancy, consuming his own exhaust, and bringing the entire system around him to model collapse.

“The result of a consistent and total substitution of lies for factual truth is not that the lie will now be accepted as truth… but that the sense by which we take our bearings in the real world… is being destroyed.”

—Hannah Arendt, “Truth and Politics”


Bluesky 🦋: jim-stewartson

Threads: jimstewartson
